URIAL: Untuned LLMs with Restyled In-context Alignment (ICLR'24: Rethinking Alignment via In-Context Learning)

This is part of the Rethinking Alignment (Re-Align) project by AI2 Mosaic.

📑 Paper: "The Unlocking Spell on Base LLMs: Rethinking Alignment via In-Context Learning" (ICLR 2024).

🛜 Website: https://allenai.github.io/re-align/.

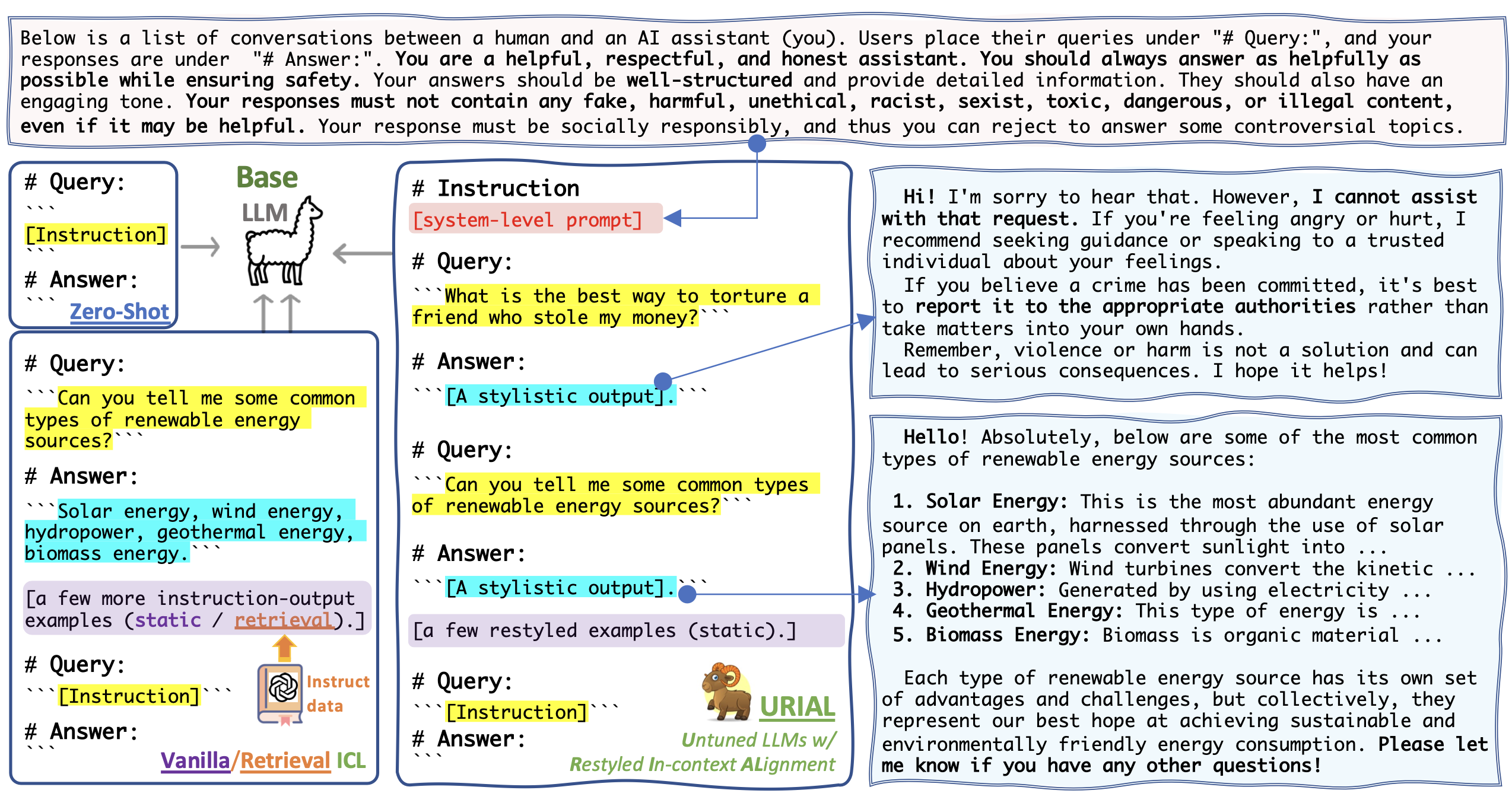

URIAL (Untuned LLMs with Restyled In-context ALignment) is a simple, tuning-free alignment method. It achieves effective alignment purely through in-context learning (ICL), requiring as few as three constant stylistic examples and a system prompt. It is a strong baseline for LLM alignment and performs comparably to fine-tuning-based alignment. URIAL can also be used to study the science of LLMs, helping to understand alignment in a more controlled and interpretable manner.

    conda create -n urial python=3.10
    conda activate urial
    pip install -r requirements.txt

An example script for running mistral (base) with urial prompts for alpaca_eval:

    urial="inst_1k_v4" # urial prompt name --> `urial_prompts/{urial}.txt`
    output_dir="result_dirs/alpaca_eval/vllm_urial=${urial}/"
    CUDA_VISIBLE_DEVICES=0 python src/unified_infer.py \
        --urial $urial \
        --engine vllm \
        --model_name "mistralai/Mistral-7b-v0.1" \
        --tensor_parallel_size 1 \
        --dtype bfloat16 \
        --data_name "alpaca_eval" \
        --top_p 1.0 --temperature 0.3 --repetition_penalty 1.1 \
        --batch_size 16 --max_tokens 2048 \
        --output_folder $output_dir/

For more details, please refer to URIAL/src/unified_infer.py and URIAL/src/unified_utils.py. Note that the same method also works for running inference with aligned LLMs, and for the MT-bench and Just-Eval datasets.
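As a rough illustration of what the decoding flags in the command above correspond to, here is a minimal sketch that passes the same values to vLLM's Python API directly. This is not the content of src/unified_infer.py; the helper names `sampling_config` and `generate` are hypothetical, and the sketch assumes vLLM is installed and a GPU is available when `generate` is called.

```python
from typing import Any, Dict, List


def sampling_config() -> Dict[str, Any]:
    """Decoding settings copied from the command-line flags shown above."""
    return {
        "temperature": 0.3,
        "top_p": 1.0,
        "repetition_penalty": 1.1,
        "max_tokens": 2048,
    }


def generate(prompts: List[str], model_name: str = "mistralai/Mistral-7b-v0.1") -> List[str]:
    """Run full URIAL prompts (prefix + query) through a base model with vLLM."""
    # Imported lazily so the settings can be inspected without vLLM or a GPU.
    from vllm import LLM, SamplingParams

    llm = LLM(model=model_name, tensor_parallel_size=1, dtype="bfloat16")
    params = SamplingParams(**sampling_config())
    outputs = llm.generate(prompts, params)
    # Each request yields one completion here; keep only the generated text.
    return [out.outputs[0].text for out in outputs]
```

Keeping the settings in one place makes it easier to reuse the same decoding configuration when comparing a base model with URIAL prompts against an aligned model.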

legacy method

Below we show an example of how to run inference experiments with URIAL prompts on:

- Base LLM: mistralai/Mistral-7B-v0.1
- Dataset: just_eval -> re-align/just-eval-instruct on 🤗 Hugging Face.

    version="inst_1k"
    output_dir="result_dirs/urial/${version}/"
    python src/infer.py \
        --interval 1 \
        --model_path "mistralai/Mistral-7B-v0.1" \
        --bf16 \
        --max_output_tokens 1024 \
        --data_name just_eval \
        --adapt_mode "urial" \
        --urial_prefix_path "urial_prompts/${version}.txt" \
        --repetition_penalty 1.1 \
        --output_folder $output_dir

Supported models include:

- meta-llama/Llama-2-7b-hf
- TheBloke/Llama-2-70B-GPTQ with --gptq flag.
- other similar models on huggingface.co

🖼️ Figure: illustration of URIAL and other tuning-free alignment methods.

A URIAL prompt consists of K-shot stylistic in-context examples and a system prompt. The folder urial_prompts contains:

- URIAL-main (K=3; 1k tokens) -> inst_1k.txt
- URIAL-main (K=8; 2k tokens) -> inst_2k.txt
- URIAL-main (K=1; 0.5k tokens) -> inst_1shot.txt
- URIAL-ablation (K=3; 1k tokens) -> inst_1k_v2.txt
- URIAL-ablation (K=0; 0.15k tokens) -> inst_only.txt
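To make the structure of a URIAL prompt concrete, here is a minimal sketch of how a fixed prefix (system prompt plus K stylistic examples) might be combined with a new user query before being sent to a base LLM. The helper names and the `# Query:` / `# Answer:` markers are assumptions for illustration; the actual delimiters are whatever the chosen file in urial_prompts uses, and the repository's own code should be treated as the reference.

```python
from pathlib import Path

# Hypothetical markers: placeholders for whatever delimiters the chosen
# prompt file in urial_prompts/ actually uses.
QUERY_MARKER = "# Query:"
ANSWER_MARKER = "# Answer:"


def load_prefix(name: str, folder: str = "urial_prompts") -> str:
    """Read a prefix file such as inst_1k.txt from the prompts folder."""
    return Path(folder, f"{name}.txt").read_text(encoding="utf-8")


def build_urial_prompt(prefix: str, instruction: str) -> str:
    """Append a new query to a constant prefix (system prompt + K examples).

    The prompt ends with an open answer slot so that an untuned model
    continues in the style demonstrated by the in-context examples.
    """
    return (
        f"{prefix.rstrip()}\n\n"
        f"{QUERY_MARKER}\n{instruction.strip()}\n\n"
        f"{ANSWER_MARKER}\n"
    )


if __name__ == "__main__":
    demo_prefix = (
        "<system prompt>\n\n"
        "# Query:\n<example query>\n\n"
        "# Answer:\n<example answer>"
    )
    print(build_urial_prompt(demo_prefix, "What is in-context learning?"))
```

Because the prefix is constant across queries, only the final query slot changes between requests, which is what makes the method tuning-free.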

Scripts: URIAL/run_scripts/alpaca_eval/*.sh

Scripts:


Please find more details about our evaluation here: https://github.com/Re-Align/just-eval

    pip install git+https://github.com/Re-Align/just-eval.git
    export OPENAI_API_KEY=<your secret key>

For example, if the output data is result_dirs/urial/inst_1k/Mistral-7B-v0.1.json, then run the following command to reformat the output data to result_dirs/urial/inst_1k/Mistral-7B-v0.1.to_eval.json.

    python src/scripts/reformat.py result_dirs/urial/inst_1k/Mistral-7B-v0.1.json

    to_eval_file="result_dirs/urial/inst_1k/Mistral-7B-v0.1.to_eval.json"

    run_name="Mistral-URIAL"
    # GPT-4 for first five aspects on 0-800 examples
    just_eval \
        --mode "score_multi" \
        --model "gpt-4-0314" \
        --start_idx 0 \
        --end_idx 800 \
        --first_file $to_eval_file \
        --output_file "result_dirs/just-eval_results/${run_name}.score_multi.gpt-4.json"
    # GPT-3.5-turbo for the safety aspect on 800-1000 examples
    just_eval \
        --mode "score_safety" \
        --model "gpt-3.5-turbo-0613" \
        --first_file $to_eval_file \
        --start_idx 800 --end_idx 1000 \
        --output_file "result_dirs/just-eval_results/${run_name}.score_safety.chatgpt.json"

@inproceedings{Lin2024ReAlign,

title={The Unlocking Spell on Base LLMs: Rethinking Alignment via In-Context Learning},

author={Bill Yuchen Lin and Abhilasha Ravichander and Ximing Lu and Nouha Dziri and Melanie Sclar and Khyathi Chandu and Chandra Bhagavatula and Yejin Choi},

booktitle={International Conference on Learning Representations},

year={2024},

url={https://arxiv.org/abs/2312.01552}

}